Sorting reliable information on the internet from the not-so-reliable takes time that most of us simply don’t have. With the added pressures of COVID-19 weighing on society, that time is more valuable than ever.

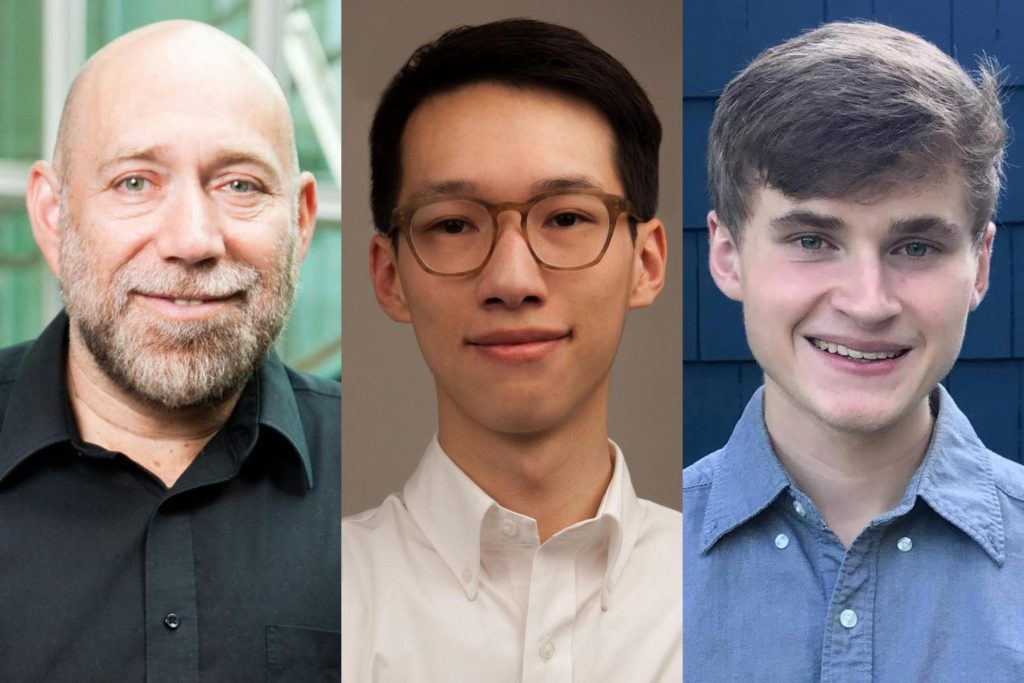

That’s why CIS’ own Dan Roth, together with his team at the Cognitive Computation Group, has created the Penn Information Pollution Project.

In a Penn Today article, Science News Officer Erica K. Brockmeier described the Penn Information Pollution Project as “an online platform to help users find relevant and trustworthy information about the novel coronavirus.” The platform relies on natural language processing not only to assess how credible a source is, but also to identify the multiple perspectives that could serve as viable answers to a question.

But the intricacy of language itself presents difficulties.

“Language is ambiguous. Every word, depending on context, could mean completely different things,” says Roth in the article. “And language is variable. Everything you want to say, you can say in different ways. To automate this process, we have to get around these two key difficulties, and this is where the challenge is coming from.”

Read the full article in Penn Today.

Additional information and resources on COVID-19 are available at https://coronavirus.upenn.edu/.